Google’s next-gen TPU is here and it will leave you in awe

Artificial Intelligence

CIO Bulletin

2017-05-23

Google I/O, Google’s annual developer conference, always has a surprise in store. The whole tech community waits for it with bated breath, and it does not disappoint. As one of the biggest and flashiest events (and one of the most kick-ass, might I add) in Silicon Valley, it is where Google reveals its most ambitious and awe-inspiring projects.

Last year it announced the Tensor Processing Unit (TPU), Google’s very own hardware for machine learning. This year it is dropping the mother lode on us. Let me first confirm what most of you want to know. Yes, Google is releasing its second-generation TPU and, by god, it is powerful! Then there is the announcement that 1,000 of these monsters are going to be lent out for free. But let me get to that later.

Google has always wanted to own the market, not just lead it, and it has done so with Android and Chrome. Now it is setting its sights on the next big game in the tech arena: artificial intelligence. Google’s new TPU, offered through the cloud, is basically a supercomputer that can be used to train AI for whatever project you are working on. The TPU is built around TensorFlow, an in-house machine learning framework that Google developed and then open-sourced in 2015. Part of the success story lies in TensorFlow itself, as it is one of the most widely embraced frameworks in the tech community.

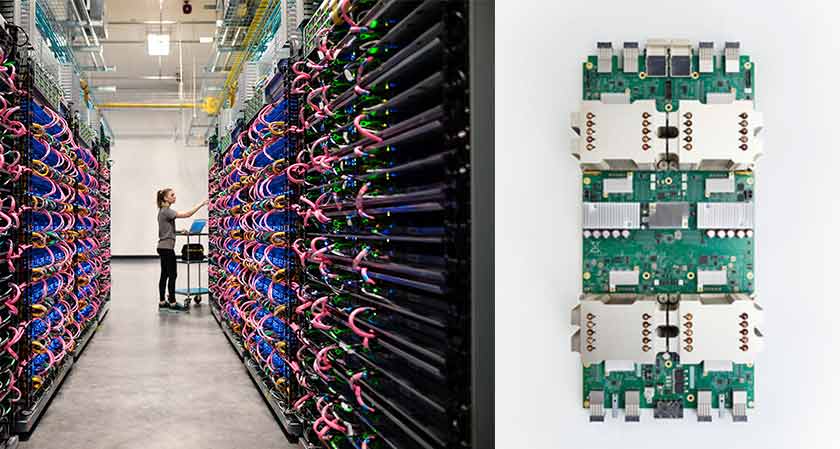

When it comes to power, it leaves its competitors gasping. Google wasn’t kidding around with this machine. A single TPU is capable of delivering up to 180 teraflops of computing performance, and Google has found a way to rig these TPUs together over a custom high-speed network, much as NVIDIA’s NVLink ties GPUs together, to form what it calls a TPU pod. So basically one TPU pod is like one big supercomputer capable of up to 11.5 petaflops.
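As a rough sanity check on those two figures, here is a minimal Python sketch that works out how many TPUs a pod would need to reach the quoted peak. The function name and constants are illustrative only, and the result is inferred from the article's numbers rather than stated in it.

```python
# Peak figures as quoted in the article.
TFLOPS_PER_TPU = 180.0   # one second-generation TPU
PFLOPS_PER_POD = 11.5    # one TPU pod

TFLOPS_PER_PFLOP = 1000.0  # 1 petaflop = 1,000 teraflops


def implied_tpus_per_pod(pod_pflops, tpu_tflops):
    """Return how many TPUs are needed to reach the pod's peak figure."""
    return pod_pflops * TFLOPS_PER_PFLOP / tpu_tflops


tpus = implied_tpus_per_pod(PFLOPS_PER_POD, TFLOPS_PER_TPU)
print(f"Implied TPUs per pod: {tpus:.1f}")

# Rounding to a whole number of TPUs and multiplying back out.
whole_tpus = round(tpus)
pod_peak = whole_tpus * TFLOPS_PER_TPU / TFLOPS_PER_PFLOP
print(f"{whole_tpus} TPUs x 180 teraflops = {pod_peak:.2f} petaflops")
```

The division comes out just under 64, so the 11.5 petaflops figure is consistent with a pod of 64 TPUs, with the total rounded down from 11.52.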

You read that right earlier. Google is making a thousand of these available for free. The TensorFlow Research Cloud program, as it will be called, will be application-based and open to anyone conducting research, not just members of academia.

If the application is accepted, researchers will get access to a cluster of a thousand TPUs that can be used for AI training and inference. The time, however, is limited and will be allocated by Google depending on the amount and nature of the work. Each TPU also comes with 64GB of built-in memory.

All of this comes with a catch. Google is asking users to share their research in peer-reviewed publications and open-source code. If that level of openness isn’t your cup of tea, Google is also planning to launch a Cloud TPU Alpha program for internal, private-sector work.

With all this coming in, it’s easy to forget what else Google might have in store. The TPUs come with a load of tools for researchers, continuing to feed the community that drives them. These include TensorFlow Lite and an API that will interface with future smartphone chips optimized for AI software. Developers can then use these to make better machine learning products for Android devices. Google’s AI empire stretches out a bit further, and Google reaps the benefits.
