IT Services

CIO Bulletin

23 June, 2018

Many tools, including Adobe's own, help people edit images and videos. Experts everywhere are increasingly worried about the power of AI to tamper with real details. As an antidote to such widely used techniques, and to social media's lack of fact-checking procedures, Adobe is developing mechanisms that will automatically identify photoshopped images.

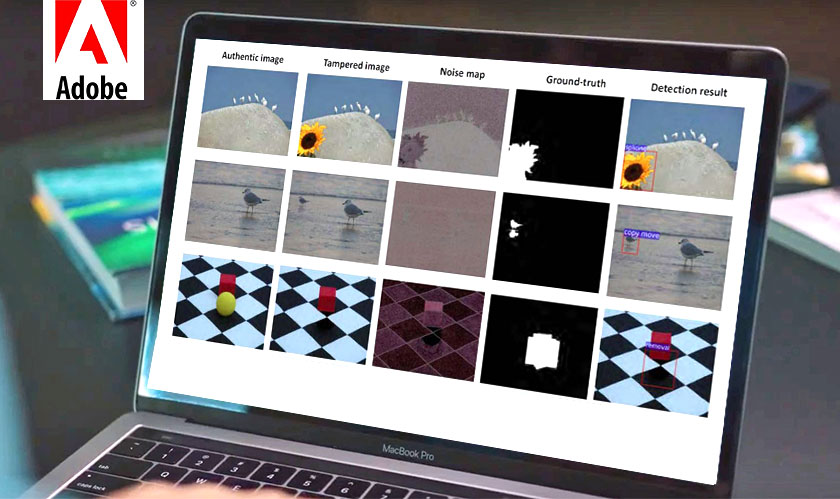

A spokesperson for Adobe said, “This is an early-stage project…but, in future…will help develop technology that helps monitor and verify the authenticity of digital media.” The new research paper illustrates how machine learning can be used to identify three common types of image manipulation: splicing, cloning, and removal.

Experts examine the hidden layers of an image to spot tampering. The edits described above disturb those layers, in particular the random variations in color and brightness created by image sensors (also known as ‘noise’). When two different images are sliced and spliced together, or when objects are copied and pasted within an image, the background noise of the image is altered. In-depth analysis of these alterations using ML can help identify the fake.
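The idea of comparing background noise across an image can be illustrated with a minimal sketch. This is not Adobe's method, which the article describes only as machine learning; it is a simple hand-written heuristic that estimates the noise level in each block of a grayscale image and flags blocks whose noise differs sharply from the rest. The function names, the block size, and the threshold are all assumptions chosen for illustration, and the test image is synthetic.

```python
import numpy as np

def noise_residual(gray):
    """High-pass residual: each pixel minus the mean of its 3x3 neighborhood."""
    padded = np.pad(gray, 1, mode="reflect")
    h, w = gray.shape
    local_mean = sum(
        padded[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3)
    ) / 9.0
    return gray - local_mean

def block_noise_map(gray, block=16):
    """Standard deviation of the noise residual in each block x block tile."""
    residual = noise_residual(gray.astype(np.float64))
    rows, cols = residual.shape[0] // block, residual.shape[1] // block
    tiles = residual[:rows * block, :cols * block].reshape(rows, block, cols, block)
    return tiles.std(axis=(1, 3))

def suspicious_blocks(noise_map, threshold=5.0):
    """Flag tiles whose noise level is a robust outlier (median/MAD score)."""
    median = np.median(noise_map)
    mad = np.median(np.abs(noise_map - median))
    scale = 1.4826 * mad + 1e-12  # MAD scaled to approximate a standard deviation
    return np.abs(noise_map - median) / scale > threshold

# Synthetic demo (assumed data): a flat gray image with mild sensor-like noise,
# plus a "spliced" 32x32 patch that carries much stronger noise.
rng = np.random.default_rng(0)
image = 128.0 + rng.normal(0.0, 2.0, size=(128, 128))
image[32:64, 32:64] = 128.0 + rng.normal(0.0, 10.0, size=(32, 32))

noise_map = block_noise_map(image, block=16)
flagged = suspicious_blocks(noise_map)
print(np.argwhere(flagged))  # (row, col) indices of tiles with unusual noise
```

On real photographs, texture and compression also affect the residual, which is one reason a learned model is a more plausible fit for this task than a fixed threshold.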

For now, however, the new technique works only against very basic alterations, though it should improve progressively as it is fed more training data. “The drawback of these approaches is that they are only as good as the training data fed into the networks…for now…less likely to learn higher-level artifacts like inconsistencies in the geometry of shadows and reflections,” says Hany Farid, a digital forensics expert.