Google introduces “faster voice typing” for Pixel phones

Mobile

CIO Bulletin

2019-03-13

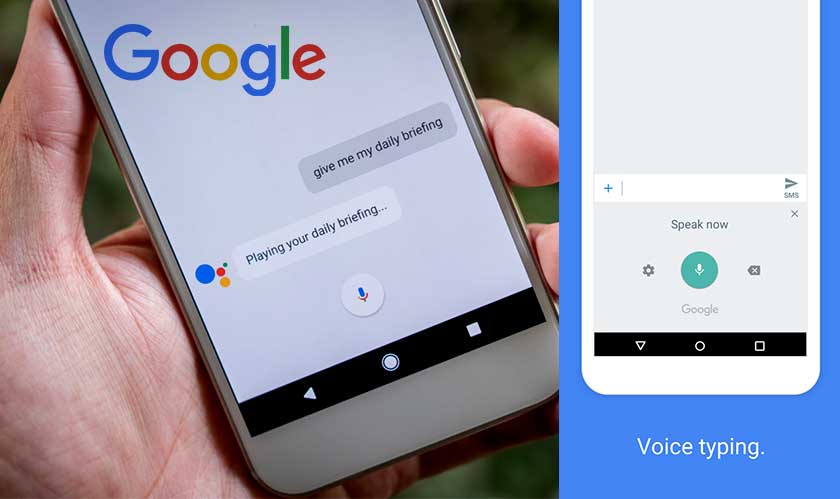

Google has announced an “end-to-end, all-neural, on-device speech recognizer” for all Pixel phones. Gboard, Google’s cross-platform virtual keyboard app, will use the end-to-end recognizer to power American English speech input on Pixel smartphones.

In simple words, Google has worked out a way to shrink the Speech-To-Text process so that it can be performed locally with the help of on-device machine learning algorithms. And the fruits of this labor are coming to Gboard. “This means no more network latency or spottiness — the new recognizer is always available, even when you are offline,” Google’s Speech Team wrote in a blog post. “The model works at the character level, so that as you speak, it outputs words character-by-character… typing out in real-time, and exactly as you’d expect from a keyboard dictation system.”

Previously, speech recorded by tapping the microphone icon was sent to Google's servers to be converted into text. This was necessary because the uncompressed speech recognition models used by Gboard were too large (about 2GB) to store on a smartphone. Transcribing messages or queries this way could take anywhere from a few milliseconds to several seconds, or longer if packets were lost in transit.

But thanks to significant accuracy improvements from deep learning, Google has finally achieved “faster voice typing” that works offline. Using recurrent neural network transducer (RNN-T) technology, Google first trained a smaller, on-device model as effective as the server-based ones. That model, however, still took up 450MB of space, too much to store locally on mobile phones. Google therefore reduced the package size further, to only 80MB, using a process called ‘model quantization.’
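To give a rough sense of what quantization means, the sketch below stores a list of 32-bit floating-point weights as 8-bit integers plus a scale and an offset. It is a generic illustration under stated assumptions, not Google's implementation: the function names `quantize` and `dequantize`, the 8-bit width, and the random weights are all hypothetical, and the article does not say which scheme produced the 80MB figure.

```python
import random
import struct


def quantize(weights, num_bits=8):
    """Map floats onto integer codes in [0, 2**num_bits - 1] (hypothetical helper)."""
    lo, hi = min(weights), max(weights)
    levels = (1 << num_bits) - 1
    # Size of one quantization step; fall back to 1.0 if all weights are equal.
    scale = (hi - lo) / levels or 1.0
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize(codes, scale, lo):
    """Recover approximate float values from the integer codes."""
    return [c * scale + lo for c in codes]


# Made-up weights standing in for one layer of a neural network.
random.seed(0)
weights = [random.gauss(0.0, 0.1) for _ in range(10_000)]

codes, scale, lo = quantize(weights)
restored = dequantize(codes, scale, lo)

# Storage cost: 4 bytes per float32 weight versus 1 byte per 8-bit code.
float_bytes = len(struct.pack(f"<{len(weights)}f", *weights))
int8_bytes = len(bytes(codes))
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"float32: {float_bytes} bytes, 8-bit: {int8_bytes} bytes")
print(f"max round-trip error: {max_error:.6f} (step size: {scale:.6f})")
```

In this toy setup the weights shrink to a quarter of their original size, at the cost of a rounding error of at most half a quantization step per weight. A production system would also need to handle activations, per-layer scales, and accuracy checks, none of which are shown here.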

Google is "hopeful" that this functionality, which has yet to be released, will be available "in more languages and across broader domains of application" soon after.
